Licensed to the Apache Software Foundation (ASF) under one or more contributor license agreements. See the NOTICE file distributed with this work for additional information regarding copyright ownership. The ASF licenses this file to you under the Apache License, Version 2.0 (the "License"); you may not use this file except in compliance with the License. You may obtain a copy of the License at

http://www.apache.org/licenses/LICENSE-2.0

Unless required by applicable law or agreed to in writing, software distributed under the License is distributed on an "AS IS" BASIS, WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied. See the License for the specific language governing permissions and limitations under the License.

Revision History

2.0.0 (2016-05-25T19:15)

Preface

This is the official reference guide for the Trafodion DCS (Database Connectivity Services), a distributed ODBC/JDBC connectivity component of Trafodion built on top of Apache ZooKeeper.

DCS is packaged within the trafodion-2.0.0.tar.gz file on the Trafodion download site. This document describes DCS version 2.0.0. Herein you will find either the definitive documentation on a DCS topic as of its standing when the referenced DCS version shipped, or a pointer to the location in the javadoc where the pertinent information can be found.

This reference guide is a work in progress. The source for this guide can be found in the src/main/asciidoc directory of the DCS source.

Getting Started

1. Introduction

Quick Start will get you up and running on a single-node instance of DCS. Configuration describes setup of DCS in a multi-node configuration.

2. Quick Start

This guide describes the setup of a single-node DCS instance. It leads you through creating a configuration, and then starting up and shutting down your DCS instance. The exercise below should take no more than ten minutes (not including download time).

2.1. Download and unpack the latest release.

Decompress and untar your download and then change into the unpacked directory.

$ tar xzf dcs-{projectVersion}.tar.gz

$ cd dcs-{projectVersion}

Is Java installed?

The steps in the following sections presume a 1.7 version of Oracle Java is installed on your machine and available on your path; i.e., when you type java, you see output that describes the options the java program takes (DCS requires Java 7 or better). If this is not the case, DCS will not start.

Install Java, then edit conf/dcs-env.sh, uncommenting the JAVA_HOME line and pointing it to your Java install.
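The Java 7 requirement can also be checked programmatically. The following is a minimal sketch, not part of DCS; the class and method names are hypothetical. It reads the java.specification.version system property, which reports "1.7" or "1.8" on older JVMs and "9", "11", and so on from Java 9 onward, and normalizes both numbering schemes to a single major version.

```java
// Hypothetical helper, not shipped with DCS: normalizes Java version strings.
final class JavaVersionCheck {
    private JavaVersionCheck() {}

    /** Returns the major version for strings such as "1.7", "1.8.0_92", "11" or "17.0.2". */
    static int majorVersion(String spec) {
        String[] parts = spec.split("[._-]");
        int first = Integer.parseInt(parts[0]);
        // Before Java 9, versions used the legacy "1.x" scheme.
        if (first == 1 && parts.length > 1) {
            return Integer.parseInt(parts[1]);
        }
        return first;
    }

    /** True if the running JVM meets the given minimum major version. */
    static boolean meetsMinimum(int minimumMajor) {
        return majorVersion(System.getProperty("java.specification.version")) >= minimumMajor;
    }
}
```

For example, JavaVersionCheck.meetsMinimum(7) returns true on any JVM that satisfies the Java 7 or better requirement.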

Is Trafodion installed and running?

DCS presumes a Trafodion instance is installed and running on your machine and available on your path; i.e., MY_SQROOT is set, and when you type sqcheck, you see output that confirms Trafodion is running. If this is not the case, DCS may start, but you’ll see many errors in the DcsServer logs related to user program startup.

At this point, you are ready to start DCS.

2.2. Starting DCS

Now start DCS:

$ bin/start-dcs.sh
localhost: starting zookeeper, logging to /logs/dcs-user-1-zookeeper-hostname.out
localhost: running Zookeeper
starting master, logging to /logs/dcs-user-1-master-hostname.out
localhost: starting server, logging to /logs/dcs-user-1-server-hostname.out

You should now have a running DCS instance. DCS logs can be found in the logs subdirectory. Peruse them especially if DCS had trouble starting.

2.3. Stopping DCS

Stop your DCS instance by running the stop script.

$ ./bin/stop-dcs.sh
localhost: stopping server.
stopping master.
localhost: stopping zookeeper.

Where to go next?

The setup described above is good for testing and experiments only. Next, move on to Configuration, where we’ll go into depth on the different requirements and critical configurations needed to set up a distributed DCS deployment.

Configuration

This chapter is the Not-So-Quick start guide to DCS configuration. Please read this chapter carefully and ensure that all requirements have been satisfied. Failure to do so will cause you (and us) grief debugging strange errors.

DCS uses the same configuration mechanism as Apache Hadoop. All configuration files are located in the conf/ directory.

Be careful editing XML. Make sure you close all elements. Run your file through xmllint or similar to ensure well-formedness of your document after an edit session.

Keep Configuration In Sync Across the Cluster

After you make an edit to a DCS configuration file, make sure you copy the content of the conf directory to all nodes of the cluster. DCS will not do this for you. Use rsync, scp, or another secure mechanism for copying the configuration files to your nodes. A restart is needed for servers to pick up changes.

This section lists required services and some required system configuration.

3. Java

| DCS Version | JDK 6 | JDK 7 | JDK 8 |
|---|---|---|---|
| 1.1 | Not Supported | yes | Running with JDK 8 has not been tested. |
| 1.0 | yes | Not Supported | Not Supported |

4. Operating System

4.1. ssh

ssh must be installed and sshd must be running to use DCS’s scripts to manage remote DCS daemons. You must be able to ssh to all nodes, including your local node, using passwordless login (Google "ssh passwordless login").

4.2. DNS

Both forward and reverse DNS resolving should work. If your machine has multiple interfaces, DCS will use the interface that the primary hostname resolves to.

4.3. Loopback IP

DCS expects the loopback IP address to be 127.0.0.1. Ubuntu and some other distributions, for example, will default to 127.0.1.1 and this will cause problems for you. /etc/hosts should look something like this:

127.0.0.1 localhost

127.0.0.1 ubuntu.ubuntu-domain ubuntu
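To spot the 127.0.1.1 problem before starting DCS, a check along these lines can help. This is a hypothetical helper, not something DCS ships; it assumes that any IPv4 loopback address other than 127.0.0.1 is worth flagging, which is how this section describes the Ubuntu default.

```java
import java.net.InetAddress;
import java.net.UnknownHostException;

// Hypothetical pre-flight check, not part of DCS.
final class LoopbackCheck {
    private LoopbackCheck() {}

    /** True for IPv4 loopback addresses other than 127.0.0.1, such as Ubuntu's 127.0.1.1. */
    static boolean isSuspiciousLoopback(InetAddress addr) {
        return addr.isLoopbackAddress()
                && addr.getAddress().length == 4
                && !"127.0.0.1".equals(addr.getHostAddress());
    }

    /** Overload for an IP literal; for literals InetAddress only validates the format. */
    static boolean isSuspiciousLoopback(String ipLiteral) {
        try {
            return isSuspiciousLoopback(InetAddress.getByName(ipLiteral));
        } catch (UnknownHostException e) {
            throw new IllegalArgumentException("not a valid address: " + ipLiteral, e);
        }
    }

    /** Checks what this machine's own host name resolves to. */
    static boolean localHostLooksMisconfigured() throws UnknownHostException {
        return isSuspiciousLoopback(InetAddress.getLocalHost());
    }
}
```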

4.4. NTP

The clocks on cluster members should be in basic alignment. Some skew is tolerable, but wild skew could generate odd behaviors. Run NTP on your cluster, or an equivalent.

4.5. Windows

DCS is not supported on Windows.

5. Run modes

5.1. Single Node

This is the default mode. Single node is what is described in the Quick Start section. In single-node mode, all DCS daemons and a local ZooKeeper run on the same node. ZooKeeper binds to a well-known port.

5.2. Multi Node

Multi node is where the daemons are spread across all nodes in the cluster. Before proceeding, ensure you have a working Trafodion instance.

Below we describe the different setups. Starting, verifying, and exploring your install is described in a section that follows, Running and Confirming Your Installation.

To set up a multi-node deploy, you will need to configure DCS by editing files in the DCS conf directory.

You may need to edit conf/dcs-env.sh to tell DCS which Java to use. In this file you set DCS environment variables such as the heap size and other options for the JVM, the preferred location for log files, etc. Set JAVA_HOME to point at the root of your Java install.

5.2.1. servers

In addition, a multi-node deploy requires that you modify conf/servers. The servers file lists all hosts that you would have running DcsServers: either one host per line, or a host name followed by the number of master executor servers (mxosrvrs) to start on it. All servers listed in this file will be started and stopped when DCS start or stop is run.
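The two accepted line formats can be illustrated with a small parser. This is a sketch under assumptions, not the parser DCS uses: it treats a bare host name as one mxosrvr, treats "host N" as N mxosrvrs, sums repeated hosts, and skips blank lines and '#' comments (the comment handling is an assumption). Under that reading, the two example servers files in Example Configurations describe the same number of mxosrvrs per host.

```java
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;

// Hypothetical parser illustrating the conf/servers formats; not DCS's own code.
final class ServersFile {
    private ServersFile() {}

    /** Returns host -> total number of mxosrvrs, in first-seen host order. */
    static Map<String, Integer> parse(List<String> lines) {
        Map<String, Integer> counts = new LinkedHashMap<>();
        for (String raw : lines) {
            String line = raw.trim();
            if (line.isEmpty() || line.startsWith("#")) {
                continue; // assumed: blank lines and comments are ignored
            }
            String[] fields = line.split("\\s+");
            // A bare host counts as one mxosrvr; "host N" counts as N.
            int n = fields.length > 1 ? Integer.parseInt(fields[1]) : 1;
            if (n < 1) {
                throw new IllegalArgumentException("mxosrvr count must be >= 1: " + raw);
            }
            counts.merge(fields[0], n, Integer::sum); // repeated hosts accumulate
        }
        return counts;
    }
}
```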

5.2.2. backup-masters

The backup-masters file lists all hosts that you would have running backup DcsMaster processes, one host per line. All servers listed in this file will be started and stopped when DCS start or stop is run.

5.2.3. master

The master file lists the host of the primary DcsMaster process. Only one host is allowed to be the primary master. The server listed in this file will be started and stopped when DCS start or stop is run.

5.2.4. ZooKeeper and DCS

See section ZooKeeper for ZooKeeper setup for DCS.

5.3. Running and Confirming Your Installation

Make sure Trafodion is running first. Start and stop the Trafodion instance by running sqstart.sh over in the MY_SQROOT/sql/scripts directory. You can ensure it started properly by testing with sqcheck.

If you are managing your own ZooKeeper, start it and confirm it’s running; otherwise, DCS will start up ZooKeeper for you as part of its start process.

Start DCS with the following command:

bin/start-dcs.sh

Run the above from the DCS_HOME directory.

You should now have a running DCS instance. DCS logs can be found in the logs subdirectory. Check them out especially if DCS had trouble starting.

DCS also puts up a UI listing vital attributes and metrics. By default it’s deployed on the DcsMaster host at port 24410 (DcsServers put up an informational HTTP server at 24430 + their instance number).

If the DcsMaster were running on a host named master.example.org on the default port, to see the DcsMaster’s homepage you’d point your browser at http://master.example.org:24410.

To stop DCS after exiting the DCS shell, enter:

./bin/stop-dcs.sh
stopping dcs...............

Shutdown can take a moment to complete. It can take longer if your cluster is composed of many machines.

6. ZooKeeper

DCS depends on a running ZooKeeper cluster. All participating nodes and clients need to be able to access the running ZooKeeper ensemble. DCS by default manages a ZooKeeper "cluster" for you. It will start and stop the ZooKeeper ensemble as part of the DCS start/stop process. You can also manage the ZooKeeper ensemble independent of DCS and just point DCS at the cluster it should use. To toggle DCS management of ZooKeeper, use the DCS_MANAGES_ZK variable in conf/dcs-env.sh. This variable, which defaults to true, tells DCS whether to start/stop the ZooKeeper ensemble servers as part of DCS start/stop.

When DCS manages the ZooKeeper ensemble, you can specify ZooKeeper configuration using its native zoo.cfg file, or, the easier option, just specify ZooKeeper options directly in conf/dcs-site.xml. A ZooKeeper configuration option can be set as a property in the DCS dcs-site.xml XML configuration file by prefacing the ZooKeeper option name with dcs.zookeeper.property. For example, the clientPort setting in ZooKeeper can be changed by setting the dcs.zookeeper.property.clientPort property. For all default values used by DCS, including ZooKeeper configuration, see section DCS Default Configuration. Look for the dcs.zookeeper.property prefix.

For the full list of ZooKeeper configurations, see ZooKeeper’s zoo.cfg. DCS does not ship with a zoo.cfg, so you will need to browse the conf directory in an appropriate ZooKeeper download.
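The prefix convention amounts to stripping dcs.zookeeper.property. from the property name. The sketch below shows only that mapping; the class name is hypothetical, and it does not reproduce how DCS actually generates ZooKeeper's configuration (for example, it does not turn dcs.zookeeper.quorum into server entries).

```java
import java.util.Map;
import java.util.Properties;

// Hypothetical illustration of the dcs.zookeeper.property.* naming convention.
final class ZkPropertyPrefix {
    static final String PREFIX = "dcs.zookeeper.property.";

    private ZkPropertyPrefix() {}

    /** Copies every dcs.zookeeper.property.X entry to key X, as it would appear in zoo.cfg. */
    static Properties toZooCfg(Map<String, String> dcsSite) {
        Properties zoo = new Properties();
        for (Map.Entry<String, String> e : dcsSite.entrySet()) {
            String key = e.getKey();
            if (key.startsWith(PREFIX) && key.length() > PREFIX.length()) {
                zoo.setProperty(key.substring(PREFIX.length()), e.getValue().trim());
            }
        }
        return zoo;
    }
}
```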

You must at least list the ensemble servers in dcs-site.xml using the dcs.zookeeper.quorum property. This property defaults to a single ensemble member at localhost, which is not suitable for a fully distributed DCS (it binds to the local machine only, and remote clients will not be able to connect).

How many ZooKeepers should I run?

You can run a ZooKeeper ensemble that comprises 1 node only, but in production it is recommended that you run a ZooKeeper ensemble of 3, 5 or 7 machines; the more members an ensemble has, the more tolerant the ensemble is of host failures. Also, run an odd number of machines. In ZooKeeper, an even number of peers is supported, but it is normally not used because an even-sized ensemble requires, proportionally, more peers to form a quorum than an odd-sized ensemble requires. For example, an ensemble with 4 peers requires 3 to form a quorum, while an ensemble with 5 also requires 3 to form a quorum. Thus, an ensemble of 5 allows 2 peers to fail, and thus is more fault tolerant than the ensemble of 4, which allows only 1 down peer.
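The majority arithmetic behind that recommendation is easy to check. In this minimal sketch (hypothetical class name), a quorum of an n-member ensemble is floor(n/2) + 1 members, and the ensemble survives n minus that many failures.

```java
// Hypothetical helper showing ZooKeeper majority arithmetic.
final class ZkQuorum {
    private ZkQuorum() {}

    /** Smallest majority of an ensemble with n voting members. */
    static int quorumSize(int n) {
        if (n < 1) {
            throw new IllegalArgumentException("ensemble size must be >= 1");
        }
        return n / 2 + 1; // integer division gives floor(n/2)
    }

    /** How many members can fail while a majority still survives. */
    static int toleratedFailures(int n) {
        return n - quorumSize(n);
    }
}
```

Both 4 and 5 members need a quorum of 3, but only the 5-member ensemble tolerates 2 failures, matching the example above.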

Give each ZooKeeper server around 1GB of RAM, and if possible, its own dedicated disk (A dedicated disk is the best thing you can do to ensure a performant ZooKeeper ensemble). For very heavily loaded clusters, run ZooKeeper servers on separate machines from DcsServers.

For example, to have DCS manage a ZooKeeper quorum on nodes host{1,2,3,4,5}.example.com, bound to port 2222 (the default is 2181), ensure DCS_MANAGES_ZK is commented out or set to true in conf/dcs-env.sh, and then edit conf/dcs-site.xml and set dcs.zookeeper.property.clientPort and dcs.zookeeper.quorum. You should also set dcs.zookeeper.property.dataDir to something other than the default, as the default has ZooKeeper persist data under /tmp, which is often cleared on system restart. In the example below we have ZooKeeper persist to /usr/local/zookeeper.

<configuration>
  ...
  <property>
    <name>dcs.zookeeper.property.clientPort</name>
    <value>2222</value>
    <description>Property from ZooKeeper's config zoo.cfg.
    The port at which the clients will connect.
    </description>
  </property>
  <property>
    <name>dcs.zookeeper.quorum</name>
    <value>host1.example.com,host2.example.com,host3.example.com,host4.example.com,host5.example.com</value>
    <description>Comma separated list of servers in the ZooKeeper Quorum.
    For example, "host1.mydomain.com,host2.mydomain.com,host3.mydomain.com".
    By default this is set to localhost. For a multi-node setup, this should be set to a full
    list of ZooKeeper quorum servers. If DCS_MANAGES_ZK=true is set in dcs-env.sh,
    this is the list of servers which we will start/stop ZooKeeper on.
    </description>
  </property>
  <property>
    <name>dcs.zookeeper.property.dataDir</name>
    <value>/usr/local/zookeeper</value>
    <description>Property from ZooKeeper's config zoo.cfg.
    The directory where the snapshot is stored.
    </description>
  </property>
  ...
</configuration>

6.1. Using existing ZooKeeper ensemble

To point DCS at an existing ZooKeeper cluster, one that is not managed by DCS, uncomment DCS_MANAGES_ZK in conf/dcs-env.sh and set it to false:

# Tell DCS whether it should manage its own instance of ZooKeeper or not.
export DCS_MANAGES_ZK=false

Next, set the ensemble locations and client port, if non-standard, in dcs-site.xml, or add a suitably configured zoo.cfg to DCS’s CLASSPATH. DCS will prefer the configuration found in zoo.cfg over any settings in dcs-site.xml.

When DCS manages ZooKeeper, it will start/stop the ZooKeeper servers as a part of the regular start/stop scripts. If you would like to run ZooKeeper yourself, independent of DCS start/stop, do the following:

${DCS_HOME}/bin/dcs-daemons.sh {start,stop} zookeeper

Note that you can use DCS in this manner to start up a ZooKeeper cluster unrelated to DCS. Just make sure to uncomment and set DCS_MANAGES_ZK to false if you want it to stay up across DCS restarts, so that when DCS shuts down, it doesn’t take ZooKeeper down with it.

For more information about running a distinct ZooKeeper cluster, see the ZooKeeper Getting Started Guide. Additionally, see the ZooKeeper Wiki or the ZooKeeper documentation for more information on ZooKeeper sizing.

7. Configuration Files

7.1. dcs-site.xml and dcs-default.xml

Site-specific customizations for DCS go into the file conf/dcs-site.xml. For the list of configurable properties, see DCS Default Configuration below, or view the raw dcs-default.xml source file in the DCS source code at src/main/resources.

Not all configuration options make it out to dcs-default.xml. Configuration options that are rarely expected to change may exist only in code; the only way to discover such options is to read the source code itself.

Currently, changes here will require a cluster restart for DCS to notice the change.
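Several defaults in the list below refer to other properties or to Java system properties, for example ${dcs.tmp.dir}/zookeeper. Hadoop-style configuration expands such ${name} references when a value is read. The following is a hypothetical sketch of that expansion, not DCS's implementation: it looks names up in the supplied configuration first (this lookup order is an assumption), falls back to Java system properties such as java.io.tmpdir and user.name, leaves unknown names untouched, and caps nested expansion at an arbitrary depth of 20 to avoid infinite loops.

```java
import java.util.Map;
import java.util.regex.Matcher;
import java.util.regex.Pattern;

// Hypothetical ${name} expander illustrating how defaults such as
// ${dcs.tmp.dir}/zookeeper resolve; not DCS's own code.
final class ConfigExpander {
    private static final Pattern VAR = Pattern.compile("\\$\\{([^}]+)\\}");
    private static final int MAX_DEPTH = 20; // arbitrary guard against cycles

    private ConfigExpander() {}

    static String expand(String value, Map<String, String> conf) {
        String current = value;
        for (int depth = 0; depth < MAX_DEPTH; depth++) {
            Matcher m = VAR.matcher(current);
            StringBuffer out = new StringBuffer();
            boolean changed = false;
            while (m.find()) {
                String name = m.group(1);
                String replacement = conf.containsKey(name) ? conf.get(name) : System.getProperty(name);
                if (replacement == null) {
                    replacement = m.group(0); // leave unresolved variables as written
                } else {
                    changed = true;
                }
                m.appendReplacement(out, Matcher.quoteReplacement(replacement));
            }
            m.appendTail(out);
            current = out.toString();
            if (!changed) {
                break; // nothing left that can be resolved
            }
        }
        return current;
    }
}
```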

7.2. DCS Default Configuration

The documentation below is generated using the default dcs configuration file, dcs-default.xml, as source.

dcs.tmp.dir

Description

Temporary directory on the local filesystem. Change this setting to point to a location more permanent than '/tmp' (the '/tmp' directory is often cleared on machine restart).

Default: ${java.io.tmpdir}/dcs-${user.name}

dcs.local.dir

Description

Directory on the local filesystem to be used for local storage.

Default: ${dcs.tmp.dir}/local/

dcs.master.port

Description

Default DCS port.

Default: 23400

dcs.master.port.range

Description

Default range of ports.

Default: 100

dcs.master.info.port

Description

The port for the DcsMaster web UI. Set to -1 if you do not want a UI instance run.

Default: 24400

dcs.master.info.bindAddress

Description

The bind address for the DcsMaster web UI.

Default: 0.0.0.0

dcs.master.server.restart.handler.attempts

Description

Maximum number of times the DcsMaster restart handler will try to restart the DcsServer.

Default: 3

dcs.master.server.restart.handler.retry.interval.millis

Description

Interval, in milliseconds, between DcsServer restart handler retries.

Default: 1000

dcs.master.listener.request.timeout

Description

Listener request timeout, in milliseconds. Default 30 seconds.

Default: 30000

dcs.master.listener.selector.timeout

Description

Listener selector timeout, in milliseconds. Default 10 seconds.

Default: 10000

dcs.server.user.program.max.heap.pct.exit

Description

Set this value to a percentage of the initial heap size (for example, value: 80), which you do not want the current heap size to exceed. When the Trafodion session disconnects, the DCS server’s user program checks its current heap size. If the difference between its current and initial heap sizes exceeds this percentage, the user program will exit, and the DCS server will restart it. If the difference between its current and initial heap sizes does not exceed this percentage, the user program will be allowed to keep running. The default is 0, which means that the heap size is not checked after the session disconnects and the user program keeps running.

Default: 0
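The heap check described above can be sketched as a pure function. This reflects one reading of the description (exit when heap growth since startup exceeds the given percentage of the initial heap) and is not the user program's actual code; the class and method names are hypothetical.

```java
// Hypothetical model of dcs.server.user.program.max.heap.pct.exit.
final class HeapExitPolicy {
    private HeapExitPolicy() {}

    /**
     * Assumed reading: exit when (current - initial) exceeds pct percent of initial.
     * A pct of 0 (the default) disables the check.
     */
    static boolean shouldExit(long initialHeapBytes, long currentHeapBytes, int pct) {
        if (pct <= 0 || initialHeapBytes <= 0) {
            return false;
        }
        long growth = currentHeapBytes - initialHeapBytes;
        // Compare growth * 100 > initial * pct to stay in integer arithmetic.
        return growth * 100 > initialHeapBytes * pct;
    }
}
```

Under this reading, with an initial heap of 1000 MB and a value of 80, the user program would exit after a session disconnects once its heap has grown beyond 1800 MB.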

dcs.server.user.program.zookeeper.session.timeout

Description

User program ZooKeeper session timeout. Default 180 seconds.

Default: 180

dcs.server.user.program.exit.after.disconnect

Description

User program calls exit() after client disconnect. The default is 0, meaning do not exit after disconnect. Really only for developer use.

Default: 0

dcs.server.info.port

Description

The port for the DcsServer web UI. Set to -1 if you do not want the server UI to run.

Default: 40030

dcs.server.info.bindAddress

Description

The address for the DcsServer web UI.

Default: 0.0.0.0

dcs.server.info.port.auto

Description

Whether or not the DcsServer UI should search for a port to bind to. Enables automatic port search if dcs.server.info.port is already in use.

Default: true

dcs.dns.interface

Description

DCS uses the local host name for reporting its IP address. If your machine has multiple interfaces, DCS will use the interface that the primary host name resolves to. If this is insufficient, you can set this property to indicate the primary interface, e.g., "eth1". This only works if your cluster configuration is consistent and every host has the same network interface configuration.

Default: default

dcs.info.threads.max

Description

The maximum number of threads of the info server thread pool. Threads in the pool are reused to process requests. This controls the maximum number of requests processed concurrently. It may help to control the memory used by the info server to avoid out of memory issues. If the thread pool is full, incoming requests will be queued up and wait for some free threads. The default is 100.

Default: 100

dcs.info.threads.min

Description

The minimum number of threads of the info server thread pool. The thread pool always has at least this number of threads, so the info server is ready to serve incoming requests. The default is 2.

Default: 2

dcs.server.handler.threads.max

Description

For every DcsServer specified in the conf/servers file, the maximum number of server handler threads that will be created. There can never be more than this value for any given DcsServer. The default is 10.

Default: 10

dcs.zookeeper.dns.interface

Description

The name of the Network Interface from which a ZooKeeper server should report its IP address.

Default: default

dcs.zookeeper.dns.nameserver

Description

The host name or IP address of the name server (DNS) which a ZooKeeper server should use to determine the host name used by the master for communication and display purposes.

Default: default

dcs.server.versionfile.writeattempts

Description

How many times to retry writing a version file before aborting. Each attempt is separated by dcs.server.thread.wakefrequency milliseconds.

Default: 3

zookeeper.session.timeout

Description

ZooKeeper session timeout. DCS passes this to the ZooKeeper quorum as the suggested maximum time for a session (this setting becomes ZooKeeper’s 'maxSessionTimeout'). See http://hadoop.apache.org/zookeeper/docs/current/zookeeperProgrammers.html#ch_zkSessions: "The client sends a requested timeout, the server responds with the timeout that it can give the client." In milliseconds.

Default: 180000
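Because the server has the final say, the timeout a client actually gets is clamped to the server's limits. The sketch below shows that negotiation with hypothetical names; the fallbacks it uses when the limits are not set (minSessionTimeout of 2 * tickTime and maxSessionTimeout of 20 * tickTime) are ZooKeeper's documented defaults.

```java
// Hypothetical model of ZooKeeper's server-side session timeout negotiation.
final class SessionTimeout {
    private SessionTimeout() {}

    /**
     * Clamps the requested timeout to [min, max]. A null min or max falls back
     * to ZooKeeper's defaults of 2 * tickTime and 20 * tickTime respectively.
     */
    static int negotiate(int requestedMs, int tickTimeMs, Integer minMs, Integer maxMs) {
        int min = (minMs != null) ? minMs : 2 * tickTimeMs;
        int max = (maxMs != null) ? maxMs : 20 * tickTimeMs;
        return Math.max(min, Math.min(max, requestedMs));
    }
}
```

With a tickTime of 2000 ms and no explicit maxSessionTimeout, a request of 180000 ms would be cut to 40000 ms, which illustrates why the description ties this setting to maxSessionTimeout.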

zookeeper.znode.parent

Description

Root znode for DCS in ZooKeeper. All of DCS’s ZooKeeper znodes that are configured with a relative path will go under this node. By default, all of DCS’s ZooKeeper file paths are configured with a relative path, so they will all go under this directory unless changed.

Default: /${user.name}

dcs.zookeeper.quorum

Description

Comma separated list of servers in the ZooKeeper Quorum. For example, "host1.mydomain.com,host2.mydomain.com,host3.mydomain.com". By default this is set to localhost. For a fully-distributed setup, this should be set to a full list of ZooKeeper quorum servers. If DCS_MANAGES_ZK is set in dcs-env.sh, this is the list of servers which we will start/stop ZooKeeper on.

Default: localhost

dcs.zookeeper.peerport

Description

Port used by ZooKeeper peers to talk to each other. See http://hadoop.apache.org/zookeeper/docs/r3.1.1/zookeeperStarted.html#sc_RunningReplicatedZooKeeper for more information.

Default: 2888

dcs.zookeeper.leaderport

Description

Port used by ZooKeeper for leader election. See http://hadoop.apache.org/zookeeper/docs/r3.1.1/zookeeperStarted.html#sc_RunningReplicatedZooKeeper for more information.

Default: 3888

dcs.zookeeper.useMulti

Description

Instructs DCS to make use of ZooKeeper’s multi-update functionality. This allows certain ZooKeeper operations to complete more quickly and prevents some issues with rare ZooKeeper failure scenarios (see the release note of HBASE-6710 for an example). IMPORTANT: only set this to true if all ZooKeeper servers in the cluster are on version 3.4+ and will not be downgraded. ZooKeeper versions before 3.4 do not support multi-update and will not fail gracefully if multi-update is invoked (see ZOOKEEPER-1495).

Default: false

dcs.zookeeper.property.initLimit

Description

Property from ZooKeeper’s config zoo.cfg. The number of ticks that the initial synchronization phase can take.

Default: 10

dcs.zookeeper.property.syncLimit

Description

Property from ZooKeeper’s config zoo.cfg. The number of ticks that can pass between sending a request and getting an acknowledgment.

Default: 5

dcs.zookeeper.property.dataDir

Description

Property from ZooKeeper’s config zoo.cfg. The directory where the snapshot is stored.

Default: ${dcs.tmp.dir}/zookeeper

dcs.zookeeper.property.clientPort

Description

Property from ZooKeeper’s config zoo.cfg. The port at which the clients will connect.

Default: 2181

dcs.zookeeper.property.maxClientCnxns

Description

Property from ZooKeeper’s config zoo.cfg. Limit on number of concurrent connections (at the socket level) that a single client, identified by IP address, may make to a single member of the ZooKeeper ensemble. Set high to avoid zk connection issues running standalone and pseudo-distributed.

Default: 300

dcs.server.user.program.statistics.interval.time

Description

How often, in seconds, aggregation data is published. Setting this value to '0' reverts to the default. Setting this value to '-1' disables publishing aggregation data. The default is 60.

Default: 60

dcs.server.user.program.statistics.limit.time

Description

How long, in seconds, a query must have been executing before its statistics are published to metric_query_table. To publish all queries, set this value to '0'. Setting this value to '-1' disables publishing any data to metric_query_table. The default is 60. Warning: setting this value to 0 will cause query performance to degrade.

Default: 60

dcs.server.user.program.statistics.type

Description

Type of statistics to be published. User can set it as 'session' or 'aggregated'. By 'aggregated', only session stats and aggregation stats will be published, and query stats will be published only when a query executes longer than the time limit specified by the property 'dcs.server.user.program.statistics.limit.time'. By 'session', only session stats will be published. The default is 'aggregated'.

Default: aggregated

dcs.server.user.program.statistics.enabled

Description

Whether statistics publication is enabled. The default is true. Set to false to disable.

Default: true
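The interaction between the statistics settings above can be summarized in code. This is a hypothetical sketch of the documented rules, not DCS's implementation: an interval of 0 reverts to the default of 60 and -1 disables aggregation publishing; for per-query statistics, 'session' publishes none, a limit of -1 publishes none, a limit of 0 publishes every query, and otherwise only queries running longer than the limit are published.

```java
// Hypothetical summary of the dcs.server.user.program.statistics.* rules.
final class StatsPolicy {
    static final int DEFAULT_INTERVAL_SECONDS = 60;

    private StatsPolicy() {}

    /** 0 reverts to the default; -1 (disabled) and positive values pass through. */
    static int effectiveInterval(int configuredSeconds) {
        return configuredSeconds == 0 ? DEFAULT_INTERVAL_SECONDS : configuredSeconds;
    }

    /** Whether one query's statistics would be written to metric_query_table. */
    static boolean publishQueryStats(boolean enabled, String type, int limitSeconds, long elapsedSeconds) {
        if (!enabled || limitSeconds == -1) {
            return false;
        }
        if ("session".equals(type)) {
            return false; // session mode publishes session stats only
        }
        if (limitSeconds == 0) {
            return true; // 0 publishes every query
        }
        return elapsedSeconds > limitSeconds; // "executes longer than" the limit
    }
}
```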

dcs.server.class.name

Description

The classname of the DcsServer to start. Used for development of a multithreaded server.

Default: org.trafodion.dcs.server.DcsServer

7.3. dcs-env.sh

Set DCS environment variables in this file. Examples include options to pass the JVM on start of a DCS daemon, such as heap size and garbage collector configs. You can also set the DCS configuration and log directories, niceness, ssh options, where to locate process pid files, etc. Open the file at conf/dcs-env.sh and peruse its content. Each option is fairly well documented. Add your own environment variables here if you want them read by DCS daemons on startup.

Changes here will require a cluster restart for DCS to notice the change.

7.4. log4j.properties

Edit this file to change the rate at which DCS log files are rolled and to change the level at which DCS logs messages.

Changes here will require a cluster restart for DCS to notice the change though log levels can be changed for particular daemons via the DCS UI.

7.5. master

A plain-text file that lists the host on which the primary master process should be started. Only one host is allowed to be the primary master.

7.6. backup-masters

A plain-text file that lists the hosts on which backup master processes should be started, one host per line.

8. Example Configurations

8.1. Basic Distributed DCS Install

This example shows a basic configuration for a distributed four-node cluster. The nodes are named example1, example2, and so on, through node example4 in this example. The DCS Master is configured to run on node example4. DCS Servers run on nodes example1-example4. A 3-node ZooKeeper ensemble runs on example1, example2, and example3 on the default ports. ZooKeeper data is persisted to the directory /export/zookeeper. Below we show what the main configuration files, dcs-site.xml, servers, and dcs-env.sh, found in the DCS conf directory might look like.

8.1.1. dcs-site.xml

<configuration>
  <property>
    <name>dcs.zookeeper.quorum</name>
    <value>example1,example2,example3</value>
    <description>
    Comma separated list of servers in the ZooKeeper Quorum.
    </description>
  </property>
  <property>
    <name>dcs.zookeeper.property.dataDir</name>
    <value>/export/zookeeper</value>
    <description>
    Property from ZooKeeper's config zoo.cfg.
    The directory where the snapshot is stored.
    </description>
  </property>
</configuration>

8.1.2. servers

In this file, you list the nodes that will run DcsServers. In this case, there are two DcsServers per node, each starting a single mxosrvr:

example1
example2
example3
example4
example1
example2
example3
example4

Alternatively, you can list the nodes followed by the number of mxosrvrs:

example1 2
example2 2
example3 2
example4 2

8.1.3. master

In this file, you list the node that will run the primary DcsMaster.

example4

8.1.4. backup-masters

In this file, you list the nodes that will run backup DcsMasters. In this case, there is a backup master running on the second node:

example2

8.1.5. dcs-env.sh

Below we use a diff to show the differences from default in the dcs-env.sh file. Here we are setting the DCS heap to be 4G instead of the default 128M.

$ git diff dcs-env.sh
diff --git a/conf/dcs-env.sh b/conf/dcs-env.sh
index e70ebc6..96f8c27 100644
--- a/conf/dcs-env.sh
+++ b/conf/dcs-env.sh
@@ -31,7 +31,7 @@ export JAVA_HOME=/usr/java/jdk1.7.0/
 # export DCS_CLASSPATH=
 
 # The maximum amount of heap to use, in MB. Default is 128.
-# export DCS_HEAPSIZE=128
+export DCS_HEAPSIZE=4096
 
 # Extra Java runtime options.
 # Below are what we set by default. May only work with SUN JVM.

Use rsync to copy the content of the conf directory to all nodes of the cluster.

9. High Availability (HA) Configuration

The master configuration file for DcsMaster may be configured by adding the host name to the conf/master file. If the master is configured to start on a remote node, then during DCS startup the primary master will be started on that remote node. If the conf/master file is empty, the primary master will be started on the host where the DCS start script was run. Similarly, DcsMaster backup servers may be configured by adding host names to the conf/backup-masters file. They are started and stopped automatically by the bin/master-backup.sh script whenever DCS is started or stopped. Every backup DcsMaster follows the current leader DcsMaster, watching for it to fail. If the leader fails, the first backup DcsMaster in line for succession checks whether floating IP is enabled. If it is, the backup executes the bin/scripts/dcsbind.sh script to add a floating IP address to an interface on its node. It then continues with normal initialization and eventually starts listening for new client connections. It may take several seconds for the takeover to complete. When a failed node is restored, a new DcsMaster backup may be started manually by executing the bin/dcs-daemon.sh script on the restored node.

>bin/dcs-daemon.sh start master

The newly created DcsMaster backup process will take its place at the back of the line waiting for the current DcsMaster leader to fail.
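The line of backup masters can be modeled with the standard ZooKeeper leader-election recipe, in which each candidate creates an ephemeral sequential znode, the lowest sequence number leads, and every other candidate watches its immediate predecessor. Whether DCS follows exactly this recipe, and the znode names used here, are assumptions; the sketch only computes the ordering from a list of names and does not talk to ZooKeeper.

```java
import java.util.ArrayList;
import java.util.Comparator;
import java.util.List;

// Hypothetical model of master succession; znode names such as
// "master_0000000007" are illustrative, not DCS's actual layout.
final class MasterSuccession {
    private MasterSuccession() {}

    /** Sequence suffix appended by ZooKeeper to an ephemeral sequential znode. */
    static long sequenceOf(String znode) {
        return Long.parseLong(znode.substring(znode.lastIndexOf('_') + 1));
    }

    /** Members ordered by sequence: index 0 leads, index 1 is next in line. */
    static List<String> lineOfSuccession(List<String> znodes) {
        List<String> sorted = new ArrayList<>(znodes);
        sorted.sort(Comparator.comparingLong(MasterSuccession::sequenceOf));
        return sorted;
    }

    /** The znode that self should watch, or null if self is the leader. */
    static String predecessorOf(String self, List<String> znodes) {
        List<String> line = lineOfSuccession(znodes);
        int i = line.indexOf(self);
        if (i < 0) {
            throw new IllegalArgumentException(self + " is not registered");
        }
        return i == 0 ? null : line.get(i - 1);
    }
}
```

In this model a newly started backup receives a higher sequence number than every existing member, which is consistent with it taking its place at the back of the line.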

9.1. dcs.master.port

The default value is 23400. This is the port the DcsMaster listener binds to waiting for JDBC/ODBC T4 client connections. The value may need to be changed if this port number conflicts with other ports in use on your cluster.

To change this configuration, edit conf/dcs-site.xml, copy the changed file around the cluster and restart.

9.2. dcs.master.port.range

The default value is 100. This is the total number of ports that MXOSRVRs will scan trying to find an available port to use. You must ensure the value is large enough to support the number of MXOSRVRs configured in conf/servers.
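A port scan over the configured range might look like the sketch below. It assumes each MXOSRVR needs one port from a range beginning at some base port; where DCS actually starts its scan is not specified here, and the class and method names are hypothetical. Probing with a ServerSocket only shows that a port was free at that moment; another process can still take it before the MXOSRVR binds.

```java
import java.io.IOException;
import java.net.ServerSocket;

// Hypothetical illustration of scanning a port range; not DCS's own code.
final class PortScan {
    private PortScan() {}

    /** First port in [basePort, basePort + range) that can be bound right now, or -1. */
    static int firstFreePort(int basePort, int range) {
        for (int port = basePort; port < basePort + range && port <= 65535; port++) {
            try (ServerSocket probe = new ServerSocket(port)) {
                return port; // bound successfully; the socket is closed on return
            } catch (IOException inUse) {
                // Port taken or not permitted; try the next one.
            }
        }
        return -1;
    }

    /** Assumes one port per MXOSRVR configured in conf/servers. */
    static boolean rangeIsLargeEnough(int range, int totalMxosrvrs) {
        return range >= totalMxosrvrs;
    }
}
```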

9.3. dcs.master.floating.ip

The default value is false. When set to true, the floating IP feature in the DcsMaster is enabled via the bin/dcsbind.sh script. This allows a backup DcsMaster to take over and set the floating IP address.

9.4. dcs.master.floating.ip.external.interface

There is no default value. You must ensure the value contains the correct interface for your network configuration.

9.5. dcs.master.floating.ip.external.ip.address

There is no default value. It is important that you set this to the dotted IP address appropriate for your network.

To change this configuration, edit dcs-site.xml, copy the changed file to all nodes in the cluster and restart dcs.

Architecture

10. Overview

10.1. DCS

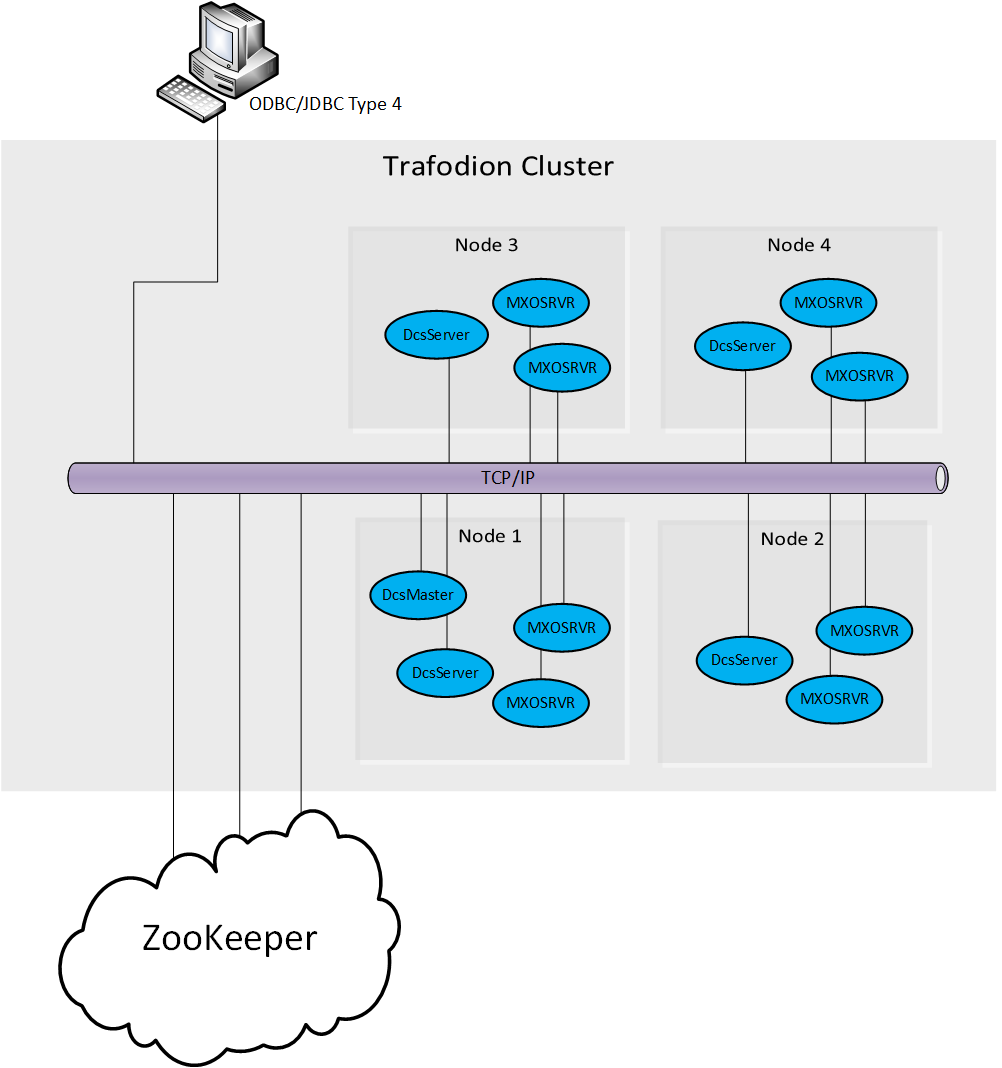

DCS (see Figure 1) is a framework that connects ODBC/JDBC Type 4 clients to Trafodion user programs (MXOSRVR servers). In a nutshell, clients connect to a listening DcsMaster on a well-known port. DcsMaster looks in ZooKeeper for an "available" DcsServer user program (MXOSRVR) and returns an object reference to that server to the client. The client then connects directly to the MXOSRVR. After the initial startup, DcsMaster restarts any failed DcsServers, and DcsServers restart any failed MXOSRVRs.

DCS provides the following:

- A lightweight process management framework.
- High performance client listener using Java NIO.
- Simple configuration and startup.
- A highly available and scalable Trafodion connectivity service.
- Uses ZooKeeper as backbone for coordination and process management.
- Embedded user interface to examine state, logs, process status.
- Standalone REST server.
- 100% Java implementation.

11. Client

The Trafodion ODBC/JDBC Type 4 client drivers connect to MXOSRVRs through the DcsMaster.

12. DcsMaster

DcsMaster is the implementation of the Master Server. The DcsMaster is responsible for listening for client connection requests, monitoring all DcsServer instances in the cluster, and restarting any DcsServers that fail after initial startup.

12.1. Startup Behavior

The DcsMaster is started via the scripts found in the /bin directory. During startup it registers itself in ZooKeeper. If the DcsMaster is started as a backup, then after registering in ZooKeeper it waits to become the next DcsMaster leader. If the backup later becomes the leader, it executes the bin/dcsbind.sh script to enable the floating IP on a given interface and IP address.
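Waiting to become the next leader resembles the general ZooKeeper leader-election pattern, in which each candidate creates an ephemeral sequential node and the candidate with the lowest sequence number leads. The following in-memory Java model illustrates that pattern only; whether the DcsMaster uses exactly this recipe is an assumption, and LeaderElectionModel is a hypothetical class with no ZooKeeper dependency.

```java
import java.util.TreeMap;

/**
 * Hypothetical in-memory model of the ZooKeeper leader-election pattern: ephemeral sequential
 * nodes, lowest sequence number leads. Not DCS code; no real ZooKeeper is involved.
 */
class LeaderElectionModel {

    private final TreeMap<Integer, String> candidates = new TreeMap<>(); // sequence -> master host
    private int nextSequence = 0;

    /** Like creating an ephemeral sequential node: returns the sequence number assigned. */
    synchronized int register(String masterHost) {
        int sequence = nextSequence++;
        candidates.put(sequence, masterHost);
        return sequence;
    }

    /** Like the ephemeral node disappearing when its DcsMaster's session ends. */
    synchronized void sessionExpired(int sequence) {
        candidates.remove(sequence);
    }

    /** The candidate with the lowest sequence number is the leader (null if there is none). */
    synchronized String leader() {
        return candidates.isEmpty() ? null : candidates.firstEntry().getValue();
    }

    public static void main(String[] args) {
        LeaderElectionModel election = new LeaderElectionModel();
        int primary = election.register("node1"); // hypothetical host names
        election.register("node2");               // backup DcsMaster waits its turn
        System.out.println("leader: " + election.leader());
        election.sessionExpired(primary);         // the primary DcsMaster goes down
        // In DCS, the new leader would now run bin/dcsbind.sh to take over the floating IP.
        System.out.println("leader: " + election.leader());
    }
}
```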

12.2. Runtime Impact

A common question is what happens to a DCS cluster when the DcsMaster goes down. Because the DcsMaster does not affect the running DcsServers or connected clients, the cluster can still function; that is, clients already connected to MXOSRVRs can continue to work. However, the DcsMaster controls critical functions such as listening for new clients and restarting DcsServers. So, while the cluster can still run for a time without the DcsMaster, the DcsMaster should be restarted as soon as possible.

12.3. High Availability

Refer to the High Availability section.

12.4. Processes

The DcsMaster runs several background threads:

12.4.1. Listener

The listener thread is responsible for servicing client requests. It pairs each client with a registered MXOSRVR found in ZooKeeper. A default port is configured, but it can be changed by modifying the dcs.master.port and dcs.master.port.range properties.
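The DCS feature list notes that the client listener is built on Java NIO. As a rough illustration only, here is a single-threaded NIO accept loop that answers each client with the address of a server supplied by a callback. It is not the DCS listener: the real T4 wire protocol is not modeled, the reply is a plain text line, and NioListenerSketch and serve are hypothetical names.

```java
import java.io.IOException;
import java.net.InetSocketAddress;
import java.nio.ByteBuffer;
import java.nio.channels.SelectionKey;
import java.nio.channels.Selector;
import java.nio.channels.ServerSocketChannel;
import java.nio.channels.SocketChannel;
import java.nio.charset.StandardCharsets;
import java.util.Iterator;
import java.util.function.Supplier;

/**
 * Hypothetical sketch, not the DCS listener: a single-threaded NIO accept loop that replies to
 * each client with one "host:port" line and closes the connection.
 */
class NioListenerSketch {

    /** Serves maxClients connections, asking pickServer for the address to hand out each time. */
    static void serve(ServerSocketChannel server, int maxClients, Supplier<String> pickServer)
            throws IOException {
        server.configureBlocking(false); // required before registering with a Selector
        try (Selector selector = Selector.open()) {
            server.register(selector, SelectionKey.OP_ACCEPT);
            int served = 0;
            while (served < maxClients) {
                selector.select(); // blocks until the server channel has a pending connection
                Iterator<SelectionKey> keys = selector.selectedKeys().iterator();
                while (keys.hasNext()) {
                    SelectionKey key = keys.next();
                    keys.remove(); // selected keys must be removed by hand
                    if (!key.isAcceptable()) {
                        continue;
                    }
                    SocketChannel accepted = server.accept(); // null if nothing is pending
                    if (accepted == null) {
                        continue;
                    }
                    // Accepted channels start in blocking mode, so this write completes fully.
                    // A production listener would register the client for OP_READ/OP_WRITE instead.
                    try (SocketChannel client = accepted) {
                        ByteBuffer reply = StandardCharsets.UTF_8.encode(pickServer.get() + "\n");
                        while (reply.hasRemaining()) {
                            client.write(reply);
                        }
                    }
                    served++;
                }
            }
        }
    }

    public static void main(String[] args) throws IOException {
        try (ServerSocketChannel server = ServerSocketChannel.open()) {
            server.bind(new InetSocketAddress(23400)); // default dcs.master.port
            serve(server, 1, () -> "node1:23401");     // hypothetical MXOSRVR address
        }
    }
}
```

The selector lets one thread wait on many channels at once, which is the usual reason to choose NIO for a listener that must handle bursts of short-lived client requests.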

12.4.2. ServerManager

The server manager thread is responsible for monitoring and restarting its child DcsServers. It runs a server handler for each DcsServer found in conf/servers.
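The monitor-and-restart behavior can be sketched as one handler per child, each running its child until it exits and then starting it again. This Java sketch is hypothetical (ServerManagerSketch is not DCS code): the child is abstracted as an IntSupplier that blocks until the child exits and returns its exit code, and maxRestarts exists only to keep the example finite. The same pattern would apply to a DcsServer's handlers for its MXOSRVRs.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.concurrent.CompletableFuture;
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;
import java.util.function.IntSupplier;

/**
 * Hypothetical ServerManager sketch, not DCS code: one handler per configured child, each
 * restarting its child whenever it exits. maxRestarts only keeps the sketch finite.
 */
class ServerManagerSketch {

    /** Runs the child, restarting it after each exit; returns the number of restarts. */
    static int superviseChild(String name, IntSupplier runChild, int maxRestarts) {
        int restarts = 0;
        while (true) {
            int exitCode = runChild.getAsInt(); // blocks until the child exits
            if (restarts >= maxRestarts) {
                return restarts;
            }
            restarts++;
            System.out.println(name + " exited with code " + exitCode + "; restart #" + restarts);
        }
    }

    /** Starts one handler thread per child name and waits for all handlers to finish. */
    static List<Integer> runAll(List<String> names, IntSupplier runChild, int maxRestarts) {
        ExecutorService pool = Executors.newFixedThreadPool(Math.max(1, names.size()));
        try {
            List<CompletableFuture<Integer>> handlers = new ArrayList<>();
            for (String name : names) {
                handlers.add(CompletableFuture.supplyAsync(
                        () -> superviseChild(name, runChild, maxRestarts), pool));
            }
            List<Integer> restarts = new ArrayList<>();
            for (CompletableFuture<Integer> handler : handlers) {
                restarts.add(handler.join());
            }
            return restarts;
        } finally {
            pool.shutdown();
        }
    }

    public static void main(String[] args) {
        // Simulated child that exits at once with code 1 (a "crash").
        IntSupplier crashingChild = () -> 1;
        System.out.println(runAll(List.of("node1", "node2"), crashingChild, 3));
    }
}
```

With a real process, the IntSupplier would start the child command with java.lang.ProcessBuilder and return the result of Process.waitFor().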

13. DcsServer

DcsServer is the server implementation. It is responsible for starting and keeping its Trafodion user program (MXOSRVR) running.

13.1. Startup Behavior

The DcsServer is started via the scripts found in the /bin directory. During startup it registers itself in ZooKeeper.

13.2. Runtime Impact

The DcsServer can continue to function if the DcsMaster goes down, and the cluster can still function in a "steady state." However, the DcsMaster controls critical functions such as restarting failed DcsServers. So, while the cluster can still run for a time without the DcsMaster, the DcsMaster should be restarted as soon as possible.

13.3. Processes

The DcsServer runs a variety of background threads:

13.3.1. ServerManager

The server manager thread is responsible for monitoring and restarting its child MXOSRVRs. It runs a server handler for each MXOSRVR found after the hostname in conf/servers.

13.3.2. ScriptManager

The script manager thread is responsible for reading and compiling the script used to run the MXOSRVR. It can detect a change in any script found in bin/scripts. If any file changes, it reloads and compiles the changed script.
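One way to detect changed scripts is to poll the directory and compare content digests between polls. The Java sketch below is hypothetical: this guide does not say how the ScriptManager detects changes, so ScriptChangeDetector simply uses SHA-256 polling, and compiling the script is left out. It reports new and modified files, but not deleted ones.

```java
import java.io.IOException;
import java.nio.file.Files;
import java.nio.file.Path;
import java.nio.file.Paths;
import java.security.MessageDigest;
import java.security.NoSuchAlgorithmException;
import java.util.ArrayList;
import java.util.HashMap;
import java.util.List;
import java.util.Map;
import java.util.stream.Collectors;
import java.util.stream.Stream;

/**
 * Hypothetical change detector, not the DCS ScriptManager: polls a directory such as
 * bin/scripts and reports files whose SHA-256 digest is new or different since the last poll.
 */
class ScriptChangeDetector {

    private final Path dir;
    private final Map<Path, String> lastDigests = new HashMap<>();

    ScriptChangeDetector(Path dir) {
        this.dir = dir;
    }

    /** Returns new or modified regular files; deleted files are not reported. */
    List<Path> poll() throws IOException {
        List<Path> changed = new ArrayList<>();
        try (Stream<Path> listing = Files.list(dir)) {
            List<Path> files = listing.filter(Files::isRegularFile).sorted().collect(Collectors.toList());
            for (Path file : files) {
                String digest = sha256(Files.readAllBytes(file));
                // put() returns the previous digest, or null the first time a file is seen.
                if (!digest.equals(lastDigests.put(file, digest))) {
                    changed.add(file); // a real manager would reload and recompile the script here
                }
            }
        }
        return changed;
    }

    private static String sha256(byte[] data) {
        try {
            StringBuilder hex = new StringBuilder();
            for (byte b : MessageDigest.getInstance("SHA-256").digest(data)) {
                hex.append(String.format("%02x", b));
            }
            return hex.toString();
        } catch (NoSuchAlgorithmException e) {
            throw new IllegalStateException("SHA-256 is required by the Java platform", e);
        }
    }

    public static void main(String[] args) throws IOException, InterruptedException {
        ScriptChangeDetector detector =
                new ScriptChangeDetector(Paths.get(args.length > 0 ? args[0] : "bin/scripts"));
        detector.poll(); // the first poll records the baseline
        while (true) {
            Thread.sleep(5000); // hypothetical polling interval
            for (Path file : detector.poll()) {
                System.out.println("changed: " + file);
            }
        }
    }
}
```

Comparing digests rather than modification times avoids missing edits on filesystems with coarse timestamps; java.nio.file.WatchService is an event-driven alternative.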

Performance Tuning

14. Operating System

14.1. Memory

RAM, RAM, RAM. Don’t starve DCS.

14.2. 64-bit

Use a 64-bit platform (and 64-bit JVM).

14.3. Swapping

Watch out for swapping. Set swappiness to 0.
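On Linux, this setting is vm.swappiness; it can be changed with sysctl -w vm.swappiness=0 and made persistent in /etc/sysctl.conf. The small Java sketch below (SwappinessCheck is a hypothetical helper, not part of DCS) reads the current value from /proc/sys/vm/swappiness so you can verify the setting on each node.

```java
import java.io.IOException;
import java.nio.charset.StandardCharsets;
import java.nio.file.Files;
import java.nio.file.Path;
import java.nio.file.Paths;

/** Hypothetical helper, not part of DCS: reports the Linux vm.swappiness value. */
class SwappinessCheck {

    static final Path SWAPPINESS = Paths.get("/proc/sys/vm/swappiness");

    /** Parses the file contents, for example "60\n", into an integer. */
    static int parse(String contents) {
        return Integer.parseInt(contents.trim());
    }

    /** The guidance in this section: swappiness should be 0. */
    static boolean followsGuidance(int swappiness) {
        return swappiness == 0;
    }

    public static void main(String[] args) throws IOException {
        if (!Files.isReadable(SWAPPINESS)) {
            System.out.println(SWAPPINESS + " is not readable; is this a Linux host?");
            return;
        }
        int value = parse(new String(Files.readAllBytes(SWAPPINESS), StandardCharsets.US_ASCII));
        System.out.println("vm.swappiness = " + value
                + (followsGuidance(value) ? " (ok)" : " (consider setting it to 0)"));
    }
}
```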

15. Network

16. ZooKeeper

See ZooKeeper for information on configuring ZooKeeper, and in particular the part about having a dedicated disk.

Troubleshooting and Debugging

17. General Guidelines

18. Logs

The key process logs are as follows (replace <user> with the user that started the service, <instance> with the server instance, and <hostname> with the machine name):

- DcsMaster: $DCS_HOME/logs/dcs-<user>-<instance>-master-<hostname>.log
- DcsServer: $DCS_HOME/logs/dcs-<user>-<instance>-server-<hostname>.log
- ZooKeeper: $DCS_HOME/logs/dcs-<user>-<instance>-zookeeper-<hostname>.log
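When checking logs across several nodes, it can help to build these names programmatically. This is a hypothetical helper, not part of DCS; it simply fills in the pattern above. The value used for <hostname> (short name versus fully qualified name) and the instance value are assumptions to verify against the files actually present in $DCS_HOME/logs.

```java
import java.net.InetAddress;
import java.net.UnknownHostException;

/** Hypothetical helper, not part of DCS: fills in the log file name pattern listed above. */
class DcsLogPaths {

    /** role is "master", "server" or "zookeeper", matching the three patterns above. */
    static String logFile(String dcsHome, String user, String instance, String role, String hostname) {
        return String.format("%s/logs/dcs-%s-%s-%s-%s.log", dcsHome, user, instance, role, hostname);
    }

    public static void main(String[] args) throws UnknownHostException {
        String home = System.getenv().getOrDefault("DCS_HOME", ".");
        String user = System.getProperty("user.name");
        String instance = args.length > 0 ? args[0] : "1"; // hypothetical instance value
        // Assumption: DCS uses this form of the host name; compare with the files in $DCS_HOME/logs.
        String host = InetAddress.getLocalHost().getHostName();
        for (String role : new String[] {"master", "server", "zookeeper"}) {
            System.out.println(logFile(home, user, instance, role, host));
        }
    }
}
```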

19. Resources

20. Tools

20.1. Builtin Tools

20.1.1. DcsMaster Web Interface

The DcsMaster starts a web interface on port 24400 by default.

The DcsMaster web UI lists created DcsServers (e.g., build info, ZooKeeper quorum, metrics). Additionally, the available DcsServers in the cluster are listed along with selected high-level metrics (listenerRequests, listenerCompletedRequests, totalAvailable/totalConnected/totalConnecting MXOSRVRs, totalHeap, usedHeap, maxHeap, etc.). The DcsMaster web UI allows navigation to each DcsServer’s web UI.

20.1.2. DcsServer Web Interface

Each DcsServer starts a web interface on port 24420 by default.

The DcsServer web UI lists its server metrics (build info, ZooKeeper quorum, usedHeap, maxHeap, etc.).

20.1.3. zkcli

zkcli is a very useful tool for investigating ZooKeeper-related issues. To invoke:

./dcs zkcli -server host:port <cmd> <args>

The commands (and arguments) are:

connect host:port
get path [watch]
ls path [watch]
set path data [version]
delquota [-n|-b] path
quit
printwatches on|off
create [-s] [-e] path data acl
stat path [watch]
close
ls2 path [watch]
history
listquota path
setAcl path acl
getAcl path
sync path
redo cmdno
addauth scheme auth
delete path [version]
setquota -n|-b val path

20.2. External Tools

20.2.1. tail

tail is the command line tool that lets you look at the end of a file. Add the -f option and it keeps printing new data as it is appended to the file. This is useful when you are wondering what’s happening, for example when a cluster is taking a long time to shut down or start up: you can just open a new terminal and tail the DcsMaster log (and maybe a few DcsServer logs).

20.2.2. top

top is probably one of the most important tools when first trying to see what’s running on a machine and how the resources are consumed. Here’s an example from a production system:

top - 14:46:59 up 39 days, 11:55, 1 user, load average: 3.75, 3.57, 3.84
Tasks: 309 total, 1 running, 308 sleeping, 0 stopped, 0 zombie
Cpu(s): 4.5%us, 1.6%sy, 0.0%ni, 91.7%id, 1.4%wa, 0.1%hi, 0.6%si, 0.0%st
Mem: 24414432k total, 24296956k used, 117476k free, 7196k buffers
Swap: 16008732k total, 14348k used, 15994384k free, 11106908k cached

  PID USER      PR  NI  VIRT  RES  SHR S %CPU %MEM    TIME+  COMMAND
15558 hadoop    18  -2 3292m 2.4g 3556 S   79 10.4   6523:52 java
13268 hadoop    18  -2 8967m 8.2g 4104 S   21 35.1   5170:30 java
 8895 hadoop    18  -2 1581m 497m 3420 S   11  2.1   4002:32 java
…

Here we can see that the system load average during the last minute is 3.75 (the three load-average values cover the last 1, 5, and 15 minutes), which very roughly means that on average 3.75 threads were waiting for CPU time during that minute. In general, the “perfect” utilization equals the number of cores; below that number the machine is underutilized, and above it the machine is overutilized. This is an important concept; see this article to understand it better: http://www.linuxjournal.com/article/9001.

Apart from load, we can see that the system is using almost all its available RAM, but most of it is used for the OS cache (which is good). Swap holds only a few KB, which is what you want; high numbers would indicate swapping activity, which is the nemesis of Java system performance. Another way to detect swapping is when the load average goes through the roof (although this could also be caused by things like a dying disk, among others).

The list of processes isn’t super useful by default; all we know is that three java processes are using about 111% of the CPUs (79 + 21 + 11). To know which is which, simply type “c” and each line will be expanded. Typing “1” will give you the detail of how each CPU is used instead of the average for all of them as shown here.
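The rule of thumb above (compare the load average with the number of cores) can also be applied from Java. This is a minimal sketch, not a DCS tool: it reads the 1-minute load average through java.lang.management.OperatingSystemMXBean.getSystemLoadAverage(), which returns a negative value where it is unavailable, and uses Runtime.availableProcessors() as the core count, which reflects the processors available to the JVM rather than necessarily the physical cores.

```java
import java.lang.management.ManagementFactory;

/** Hypothetical helper, not part of DCS: applies the load-versus-cores rule of thumb. */
class LoadCheck {

    /** Classifies a load average relative to the core count, as described above. */
    static String classify(double loadAverage, int cores) {
        if (loadAverage < cores) {
            return "under-utilized";
        }
        if (loadAverage > cores) {
            return "over-utilized";
        }
        return "fully utilized";
    }

    public static void main(String[] args) {
        // 1-minute load average, or a negative value if the platform cannot provide it.
        double load = ManagementFactory.getOperatingSystemMXBean().getSystemLoadAverage();
        int cores = Runtime.getRuntime().availableProcessors();
        if (load < 0) {
            System.out.println("Load average not available on this platform");
        } else {
            System.out.printf("load %.2f on %d cores: %s%n", load, cores, classify(load, cores));
        }
    }
}
```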

20.2.3. jps

jps is shipped with every JDK and gives the Java process IDs for the current user (if run as root, it gives the IDs for all users). Example:

21160 DcsMaster
21248 DcsServer

21. ZooKeeper

21.1. Startup Errors

21.1.1. Could not find my address: xyz in list of ZooKeeper quorum servers

If a ZooKeeper server fails to start and throws this error, xyz is the name of your server. This is a name lookup problem: DCS tries to start a ZooKeeper server on some machine, but that machine isn’t able to find itself in the dcs.zookeeper.quorum configuration.

Use the hostname presented in the error message instead of the value you used. If you have a DNS server, you can set dcs.zookeeper.dns.interface and dcs.zookeeper.dns.nameserver in dcs-site.xml to make sure it resolves to the correct FQDN.
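To see which names a machine resolves itself to, and whether any of them appears in the quorum list, you can run a check like the following on the failing node. QuorumCheck is a hypothetical helper, not part of DCS, and it assumes dcs.zookeeper.quorum is a comma-separated list of host names, optionally with :port suffixes.

```java
import java.net.InetAddress;
import java.net.UnknownHostException;
import java.util.Arrays;
import java.util.Collection;
import java.util.LinkedHashSet;
import java.util.Set;

/**
 * Hypothetical diagnostic, not part of DCS: checks whether any of this machine's names appear
 * in a dcs.zookeeper.quorum value. Host names are compared case-insensitively, as DNS names are.
 */
class QuorumCheck {

    static boolean inQuorum(String quorum, Collection<String> myNames) {
        for (String entry : quorum.split(",")) {
            String host = entry.trim();
            int colon = host.indexOf(':');
            if (colon >= 0) {
                host = host.substring(0, colon); // drop an optional ":port" suffix
            }
            for (String name : myNames) {
                if (host.equalsIgnoreCase(name)) {
                    return true;
                }
            }
        }
        return false;
    }

    /** The names this host resolves to: its host name and its canonical (FQDN) name. */
    static Set<String> localNames() throws UnknownHostException {
        InetAddress local = InetAddress.getLocalHost();
        return new LinkedHashSet<>(Arrays.asList(local.getHostName(), local.getCanonicalHostName()));
    }

    public static void main(String[] args) throws UnknownHostException {
        if (args.length != 1) {
            System.err.println("usage: QuorumCheck <value of dcs.zookeeper.quorum>");
            return;
        }
        Set<String> names = localNames();
        System.out.println("This host is known as " + names + "; in quorum: " + inQuorum(args[0], names));
    }
}
```

If the check prints false, one of the printed names is usually what belongs in dcs.zookeeper.quorum.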

21.1.2. ZooKeeper, The Cluster Canary

ZooKeeper is the cluster’s "canary in the mineshaft." It is the first to notice issues, if any, so making sure it’s happy is the shortcut to a humming cluster.

See the ZooKeeper Operating Environment Troubleshooting page. It has suggestions and tools for checking disk and networking performance; i.e. the operating environment your ZooKeeper and DCS are running in.

Additionally, the utility zkcli may help investigate ZooKeeper issues.

Operational Management

22. Tools and Utilities

Here we list tools for administration, analysis, and debugging.

22.1. DcsMaster and MXOSRVR unable to communicate via the interface specified in conf/dcs-site.xml

Symptoms: when connections are viewed in the DCS web UI, the server stays in the "CONNECTING" state and never changes to "CONNECTED".

When you see such issues, use the Linux netcat (nc) utility to verify that network communication works.

On the first node, type 'nc -l <any port number>'. The utility now runs in server mode, listening for incoming connections on the specified port. On the second node, type 'nc <external IP of the first node> <listening port specified on the first node>'. Type some text on the client node and press Enter; the message should reach the server on the first node. To exit, press Ctrl-D; both the client and the server will exit.
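Where netcat is not installed, the client half of this test can be approximated in Java. This hypothetical helper (TcpReachability is not part of DCS) only reports whether a TCP connection to host:port opens within a timeout; start a listener on the other node first, for example with nc -l as described above.

```java
import java.io.IOException;
import java.net.InetSocketAddress;
import java.net.Socket;

/** Hypothetical helper, not part of DCS: a Java stand-in for the client step of the nc test. */
class TcpReachability {

    /** Returns true if a TCP connection to host:port opens within timeoutMillis. */
    static boolean canConnect(String host, int port, int timeoutMillis) {
        try (Socket socket = new Socket()) {
            socket.connect(new InetSocketAddress(host, port), timeoutMillis);
            return true;
        } catch (IOException e) {
            // Connection refused, timeout, unknown host or unreachable network.
            return false;
        }
    }

    public static void main(String[] args) {
        if (args.length != 2) {
            System.err.println("usage: TcpReachability <host> <port>");
            return;
        }
        boolean ok = canConnect(args[0], Integer.parseInt(args[1]), 3000); // 3 s timeout
        System.out.println(ok ? "connected" : "could not connect");
    }
}
```

Unlike nc, this does not exchange any text; it only confirms that the TCP handshake succeeds on the chosen interface address.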

Another test is to run ssh in verbose mode against the public or private IP address:

ssh -v <private IP address OR public IP address>

A third test is to use the Linux traceroute tool:

traceroute <privateIP or public IP address>

Appendix

Appendix A: Contributing to Documentation

The DCS project welcomes contributions to the reference guide.

A.1. DCS Reference Guide Style Guide and Cheat Sheet

The DCS Reference Guide is written in Asciidoc and built using Asciidoctor. The following cheat sheet is included for your reference. More comprehensive documentation is available in the Asciidoctor user manual.

| Element Type | Desired Rendering | How to do it |
|---|---|---|
| A paragraph | a paragraph | Just type some text with a blank line at the top and bottom. |
| Add line breaks within a paragraph without adding blank lines | Manual line breaks | This will break + at the plus sign. Or prefix the whole paragraph with a line containing |
| Give a title to anything | Colored italic bold differently-sized text | |
| In-Line Code or commands | monospace | `text` |
| In-line literal content (things to be typed exactly as shown) | bold mono | *`typethis`* |
| In-line replaceable content (things to substitute with your own values) | bold italic mono | *_typesomething_* |
| Code blocks with highlighting | monospace, highlighted, preserve space | [source,java] ---- myAwesomeCode() { } ---- |
| Code block included from a separate file | included just as though it were part of the main file | [source,ruby] ---- include\::path/to/app.rb[] ---- |
| Include only part of a separate file | Similar to Javadoc | |
| Filenames, directory names, new terms | italic | _dcs-default.xml_ |
| External naked URLs | A link with the URL as link text | link:http://www.google.com |
| External URLs with text | A link with arbitrary link text | link:http://www.google.com[Google] |
| Create an internal anchor to cross-reference | not rendered | [[anchor_name]] |
| Cross-reference an existing anchor using its default title | an internal hyperlink using the element title if available, otherwise using the anchor name | <<anchor_name>> |
| Cross-reference an existing anchor using custom text | an internal hyperlink using arbitrary text | <<anchor_name,Anchor Text>> |
| A block image | The image with alt text | image::sunset.jpg[Alt Text] (put the image in the src/main/site/resources/images directory) |
| An inline image | The image with alt text, as part of the text flow | image:sunset.jpg[Alt Text] (only one colon) |
| Link to a remote image | show an image hosted elsewhere | image::http://inkscape.org/doc/examples/tux.svg[Tux,250,350] (or |
| Add dimensions or a URL to the image | depends | inside the brackets after the alt text, specify width, height and/or link="http://my_link.com" |
| A footnote | subscript link which takes you to the footnote | Some text.footnote:[The footnote text.] |
| A note or warning with no title | The admonition image followed by the admonition | NOTE:My note here WARNING:My warning here |
| A complex note | The note has a title and/or multiple paragraphs and/or code blocks or lists, etc | .The Title [NOTE] ==== Here is the note text. Everything until the second set of four equals signs is part of the note. ---- some source code ---- ==== |
| Bullet lists | bullet lists | * list item 1 |
| Numbered lists | numbered list | . list item 2 |
| Checklists | Checked or unchecked boxes | Checked: - [*] Unchecked: - [ ] |
| Multiple levels of lists | bulleted or numbered or combo | . Numbered (1), at top level * Bullet (2), nested under 1 * Bullet (3), nested under 1 . Numbered (4), at top level * Bullet (5), nested under 4 ** Bullet (6), nested under 5 - [x] Checked (7), at top level |
| Labelled lists / variablelists | a list item title or summary followed by content | Title:: content Title:: content |
| Sidebars, quotes, or other blocks of text | a block of text, formatted differently from the default | Delimited using different delimiters, see http://asciidoctor.org/docs/user-manual/#built-in-blocks-summary. Some of the examples above use delimiters like ...., ----, ====. [example] ==== This is an example block. ==== [source] ---- This is a source block. ---- [note] ==== This is a note block. ==== [quote] ____ This is a quote block. ____ If you want to insert literal Asciidoc content that keeps being interpreted, when in doubt, use eight dots as the delimiter at the top and bottom. |
| Nested Sections | chapter, section, sub-section, etc | = Book (or chapter if the chapter can be built alone, see the leveloffset info below) == Chapter (or section if the chapter is standalone) === Section (or subsection, etc) ==== Subsection and so on up to 6 levels (think carefully about going deeper than 4 levels, maybe you can just use titled paragraphs or lists instead). Note that you can include a book inside another book by adding the |
| Include one file from another | Content is included as though it were inline | include::/path/to/file.adoc[] For plenty of examples, see book.adoc. |
| A table | a table | See http://asciidoctor.org/docs/user-manual/#tables. Generally rows are separated by newlines and columns by pipes. |
| Comment out a single line | A line is skipped during rendering | |
| Comment out a block | A section of the file is skipped during rendering | //// Nothing between the slashes will show up. //// |
| Highlight text for review | text shows up with yellow background | Text between #hash marks# is highlighted yellow. |

A.2. Auto-Generated Content

Some parts of the DCS Reference Guide, most notably DCS default configuration, are generated automatically, so that this area of the documentation stays in sync with the code. This is done by means of an XSLT transform, which you can examine in the source at src/main/xslt/configuration_to_asciidoc_chapter.xsl. This transforms the dcs-common/src/main/resources/dcs-default.xml file into an Asciidoc output which can be included in the Reference Guide. Sometimes, it is necessary to add configuration parameters or modify their descriptions. Make the modifications to the source file, and they will be included in the Reference Guide when it is rebuilt.

It is possible that other types of content can and will be automatically generated from DCS source files in the future.

A.3. Images in the DCS Reference Guide

You can include images in the DCS Reference Guide. It is important to include an image title if possible, and alternate text always. This allows screen readers to navigate to the image and also provides alternative text for the image. The following is an example of an image with a title and alternate text. Notice the double colon.

.My Image Title

image::sunset.jpg[Alt Text]

Here is an example of an inline image with alternate text. Notice the single colon. Inline images cannot have titles. They are generally small images like GUI buttons.

image:sunset.jpg[Alt Text]

When doing a local build, save the image to the src/main/site/resources/images/ directory. When you link to the image, do not include the directory portion of the path. The image will be copied to the appropriate target location during the build of the output.

A.4. Adding a New Chapter to the DCS Reference Guide

If you want to add a new chapter to the DCS Reference Guide, the easiest way is to copy an existing chapter file, rename it, and change the ID (in double brackets) and title. Chapters are located in the src/main/asciidoc/_chapters/ directory.

Delete the existing content and create the new content.

Then open the src/main/asciidoc/book.adoc file, which is the main file for the DCS Reference Guide, and copy an existing include element to include your new chapter in the appropriate location.

Be sure to add your new file to your Git repository before creating your patch.

When in doubt, check to see how other files have been included.

A.5. Common Documentation Issues

The following documentation issues come up often. Some of these are preferences, but others can create mysterious build errors or other problems.

- Syntax Highlighting

The DCS Reference Guide uses coderay for syntax highlighting. To enable syntax highlighting for a given code listing, use the following type of syntax:

[source,xml]
----
<name>My Name</name>
----

Several syntax types are supported. The most interesting ones for the DCS Reference Guide are java, xml, sql, and bash.

Appendix B: FAQ

- What is DCS?
  See the architecture overview in the Architecture chapter.

- How can I get started with my first cluster?
  See the quickstart.

- Where can I learn about the rest of the configuration options?
  See the configuration section.

- How can I improve DCS cluster performance?
  See the performance section.

- How can I troubleshoot my DCS cluster?
  See the troubleshooting section.

- How do I manage my DCS cluster?
  See the operations management section.